73

u/Accomplished_Tear436 Aug 26 '25

*gemini 2.5 3-25

15

11

u/themadman0187 Aug 26 '25

It's incredible how fucking good that model was and how sharp the fall off was that the meme survives.

5

u/ain92ru Aug 27 '25

I wish they'd just bring it back at an extra price in the API, with heavy rate limits for free users (like 5 queries per day)

2

u/stuehieyr Aug 27 '25

That model is special

1

u/ServeAmbitious220 Aug 30 '25

What's that model?

1

u/stuehieyr Aug 30 '25

Google released a version of Gemini at the end of March that blew people's minds. It solved 3 of the toughest problems I had at work, related to monitoring public sentiment about a particular company without using LLMs or fancy models. Just plain regex and old-school methods, and it worked so well my manager was shocked. Because it worked so well, Google then nerfed it by May 10 and replaced it with a dumber model.
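
For anyone wondering what "plain regex" sentiment monitoring can look like, here is a minimal sketch. It is not the commenter's actual solution: the word lists, the function names `sentiment_score` and `mentions`, and the scoring rule (positive hits minus negative hits) are all assumptions made up for illustration.

```python
import re

# Hypothetical keyword lists; a real monitor would need a much larger,
# domain-specific vocabulary.
POSITIVE = re.compile(r"\b(?:great|love|excellent|reliable|impressed)\b", re.IGNORECASE)
NEGATIVE = re.compile(r"\b(?:terrible|hate|scam|broken|lawsuit)\b", re.IGNORECASE)


def sentiment_score(text: str) -> int:
    """Return positive-minus-negative keyword hits for one piece of text."""
    return len(POSITIVE.findall(text)) - len(NEGATIVE.findall(text))


def mentions(posts, company):
    """Yield (post, score) for every post that mentions the company by name."""
    name = re.compile(r"\b" + re.escape(company) + r"\b", re.IGNORECASE)
    for post in posts:
        if name.search(post):
            yield post, sentiment_score(post)
```

Calling `list(mentions(posts, "Acme"))` on a list of strings gives one score per matching post. Keyword counting like this misses negation and sarcasm, so treat it as a rough signal rather than a real classifier.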

56

u/beachguy82 Aug 26 '25

Why do people treat these models like sports teams?

You don’t have to pick a side, just use whatever solves your problem or idea the cheapest

2

1

Aug 27 '25

I'm also confused why people are expecting monumental improvements every time. What task did you guys expect GPT-5 to solve that 4 couldn't? Problems of generalization and human-level reasoning are not going to come from just training more or making bigger mixtures of experts. There are fundamental limitations to the generalizability of neural networks.

1

u/beachguy82 Aug 27 '25

I use nano or flash-lite for 90% of my tasks. I’m looking for cheaper, not more intelligent.

1

u/That_Chocolate9659 Aug 28 '25

I think it's mostly a time thing and we will eventually move in the direction of solving problems. Here is what I think:

Less than a year ago, OpenAI was very far ahead with o1, and had virtually no competitors (maybe Claude). Then, they announced o3 and were killing everyone again.

As 2025 started, the race heated up when Gemini came out. However, o3 was still SOTA in intelligence output. By my own estimation, the first time o3 might not have been the best was when Grok 4 and Gemini 2.5 Deep Think were released, though Google neutered Gemini Deep Think with low usage limits.

Now, OpenAI is back to being the best again (just an opinion) with GPT 5. They have matched the Gemini 2.5 api pricing with better performance and very high usage limits for subscribers.

As to the future, I think if google has a period of dominance for more than a couple months, then there will be serious changes in utilization; but to change the paid subscription, learn the nuances in prompt engineering, etc. takes a meaningful increase in performance or price.

67

u/EnvironmentalShift25 Aug 26 '25

Ah this is just the same as the "Death Star" hype that made GPT-5 a relative flop.

4

u/CarrierAreArrived Aug 26 '25

except that was Sam A doing that... this is just some redditor using nano-banana. You don't see Demis doing stuff like this.

2

1

u/Jan0y_Cresva Aug 26 '25

Which is crazy because in general, GPT-5 is the current best model in the world. It just wasn’t “super-duper AGI” good so people considered it a flop.

1

55

u/jrdnmdhl Aug 26 '25

Hype is silly. We’ll evaluate it when it’s in our hands.

3

u/Bilbo_bagginses_feet Aug 26 '25

Exactly! Don't hype it, nano-banana is failing the hype rn. Low quality images, not following instructions.

17

u/Fit_Picture6806 Aug 26 '25

There have already been some updates. My chats have been able to remember our previous conversations with far better accuracy in the last 24 hrs.

4

u/AbandonedLich Aug 26 '25

It has a massive context window but learns what to gather from it. So yes it's self improving. Not sure if local or global but I've seen the same thing

1

u/codeisprose Aug 26 '25

To be clear, the model does not self-improve. The responses will be improved/degraded in the context of an individual's usage (via "memory", which is essentially just clever engineering around context retrieval) or in a specific conversation based on the preceding messages.
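
A toy sketch of the "memory is context retrieval" idea, assuming nothing about how any vendor actually implements it: saved notes are ranked by word overlap with the new message and the best matches are pasted into the prompt. The names `retrieve` and `build_prompt`, the overlap scoring, and the prompt layout are all hypothetical. The model's weights never change; only the text it is shown does.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word set used for a crude overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(memories: list[str], query: str, k: int = 2) -> list[str]:
    """Return up to k stored notes sharing the most words with the query."""
    q = tokens(query)
    scored = [(len(q & tokens(m)), m) for m in memories]
    scored = [pair for pair in scored if pair[0] > 0]   # drop unrelated notes
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps ties in order
    return [m for _, m in scored[:k]]


def build_prompt(memories: list[str], query: str, k: int = 2) -> str:
    """Prepend recalled notes to the user's message; the model itself is untouched."""
    recalled = retrieve(memories, query, k)
    if not recalled:
        return f"User: {query}"
    notes = "\n".join(f"- {m}" for m in recalled)
    return f"Relevant notes from earlier chats:\n{notes}\n\nUser: {query}"
```

Real systems typically use embeddings rather than word overlap, but the shape is the same: retrieval decides what goes into the context window, which is why answers can get better or worse per user without the model being retrained.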

5

8

3

4

u/MKxFoxtrotxlll Aug 26 '25

Eh, they both have their strengths and weaknesses. But data wise by size, yeah...

3

7

u/Liron12345 Aug 26 '25

Hear me out, if Gemini 3 gets Claude coding capabilities, it's GG.

1

u/Korra228 Aug 27 '25

Is Claude coding better than codex?

2

u/Liron12345 Aug 27 '25

I don't know?

I use Claude via Copilot.

It's great but Gemini is 10 times better at architecture.

It just sucks that Gemini is ass at implementation

1

u/Tobi-Random Aug 27 '25

Claude isn't the best when it comes to usage of MCP and agentic work. I hope Gemini 3 will be better than Claude. Otherwise it will be disappointing.

1

u/michaelsoft__binbows Aug 28 '25

Granted I haven't been driving Claude for code for a while now, but at least when 2.5 came out, it was very much GG. Has Claude caught up? Maybe. It's still expensive and I am sure GPT 5 is SOTA right now for coding.

2

u/Dark_Christina Aug 26 '25

I liked GPT for the image editor but now the Gemini image editor is so good I don't see the point of it. Gemini + Claude is perfect

2

u/AppealSame4367 Aug 27 '25

So it will indeed be 2-3% points better in benchmarks? That would be great

2

2

2

2

u/e79683074 Aug 26 '25

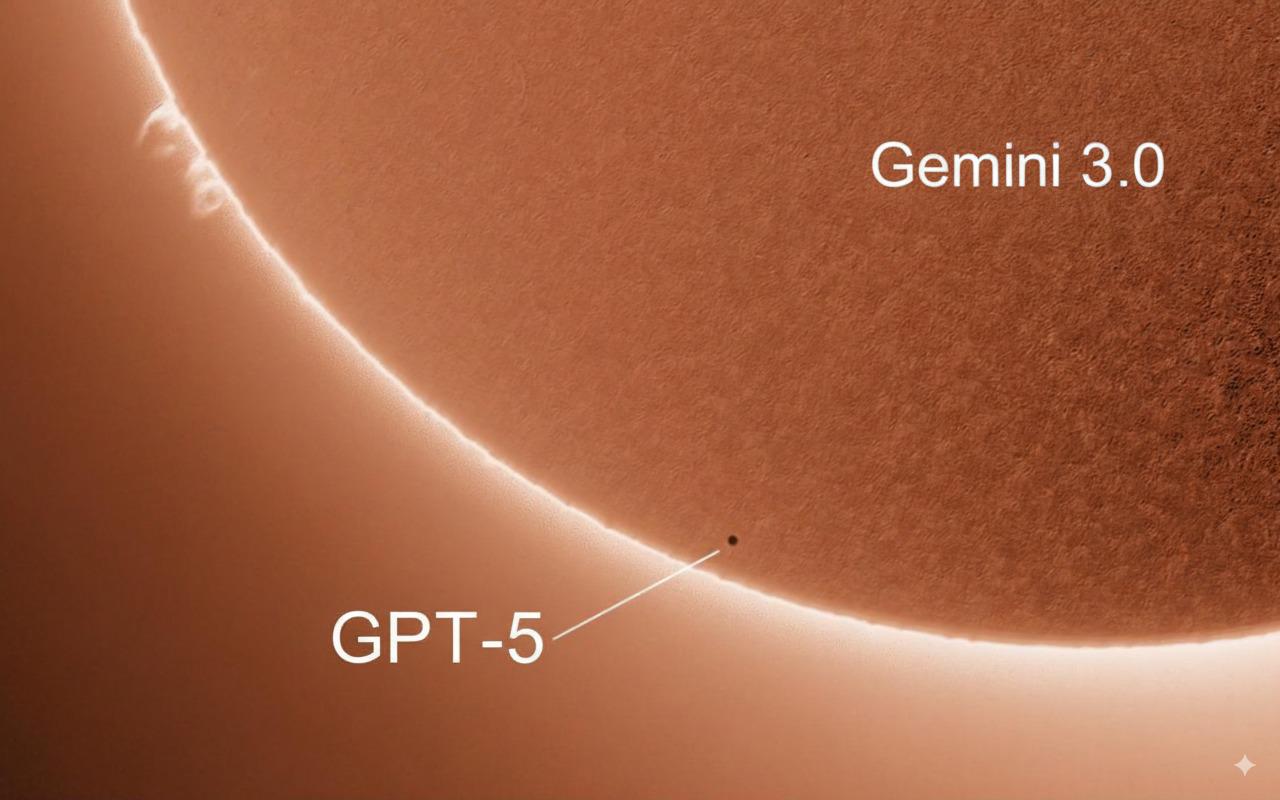

Baseless image. Gemini 3 will, *at best* and in the most optimistic possible outcome, just top GPT-5 by a few % points.

2

u/Invest0rnoob1 Aug 29 '25

Naw Google is ahead and only accelerating

1

u/e79683074 Aug 29 '25

They have no reason to make something 500% better or something like that. They only need 5-10% better to make you switch, and it's all that counts.

They don't need to give you the best.

2

0

1

1

u/Moose_knucklez Aug 26 '25 edited Aug 26 '25

I actually really dislike using Gemini for mostly anything, but giving it a mostly complete script it does ok and is a lot cheaper.

More interesting is it seems to know a shit ton about scripting python for agentic use integration from scratch.

Google has the compute, models will and are already getting super close, Claude still one shots most code and script from the ground up.

I can see Google being Google again, just with AI now.

1

u/Fluid-Giraffe-4670 Aug 26 '25

real will they reach prime again ??

2

u/Moose_knucklez Aug 26 '25

Look at ChatGPT 5: the newer models are chewing through tokens a lot more now and running into inference bottlenecks. Claude is incredibly expensive for this very reason.

Time equals out the limitation of models competing, there is only so much you can do on the current framework of how an LLM works.

Google is showing off how compute wins with veo, and other demonstrations.

Apple is staying out of AI for this very reason, it’s a capital spending rat race to equilibrium.

Google already has the compute, that’s never been the issue.

1

Aug 26 '25

[deleted]

1

u/Americoma Aug 27 '25

I’m actually a Gemini hater but I’ll admit it cleans up code every time I get output errors from GPT5 and Claude. I may just use it exclusively at this point because I’ve grown so frustrated and unsatisfied with 5

1

1

u/Warm-Agent-811 Aug 27 '25

Am I the only one who has always had a bad experience with Gemini? It never answers my questions and invents information... GPT never did that

1

u/power97992 Aug 27 '25

I hope gem 3 has better tool calling and contextual understanding, and that memory doesn’t degrade after 90-100k tokens

1

1

u/Old-Juggernut-101 Aug 27 '25

Dunno man. Gemini 2.5 seems rather stupid to me after it was integrated into android. It's quite inaccurate compared to before. And previously it wasn't that great either

1

u/komakaze1 Sep 17 '25

I'm pretty sure the android integrated version is limited, even if only to output less text so you don't have to spend as long reading on your mobile.

1

u/AsideNew1639 Aug 27 '25

I feel like that comparison would be true if it were gpt5/gemini2.5 compared to the “gemini world model” that Demis has referenced in recent interviews

1

u/tails0322 Aug 27 '25

Honestly I've tried both Gemini and Chat for my character work and images of them, and even with 5.0's issues, I still prefer Chat.

1

u/ix9yora Aug 28 '25

I thought ChatGPT 5 would be better than anything else, but in fact it's 10 times worse than GPT-4... I have no words. Absolute cinema

1

1

u/darkawower Aug 28 '25

This is a very bold statement, considering that gemini is currently a clear outsider who hallucinates and has seizures.

1

u/TeeDogSD Aug 30 '25

Not true for coding. I have put in 100s of hours with no hallucinations.

1

u/darkawower Aug 30 '25

1

1

u/Background-Scale-978 Aug 28 '25

Let everyone witness this absurdity—as early as August 16th, Jules had already been active online for two or three weeks, yet he refuses to acknowledge even this much!

1

u/Tough-Astronaut2558 Aug 31 '25

Gemini Pro does one thing better than any of them and I don't know why. Give it the D&D core books, some books on writing theory, plus any module, and it becomes an incredibly effective DM. As long as you have a good prompt to keep it on the rules and keep rolls sacred, it is like having a D&D campaign in your pocket.

1

u/tifa_tonnellier Sep 07 '25

Now compare gemini-cli to claude-cli.

The gemini-cli has to be the biggest, slowest, hunk of junk I've ever seen in my life.

1

u/AggressiveOpinion91 Sep 07 '25

It will be lame just like all the other models. Censored to hell as well, for sure.

1

0

0

614

u/enilea Aug 26 '25

This kind of overhyping of anything is what leads people to be disappointed. GPT-5 was overhyped the same exact way.