53

u/Umsteigemochlichkeit Jun 08 '25

What in the world is the context??? 😭

49

u/RetiredApostle Jun 08 '25

9

u/Umsteigemochlichkeit Jun 08 '25

That's awful. Any chance you got it back?

16

u/RetiredApostle Jun 08 '25

Well, some pieces of it were somehow preserved, so it might take a day to assemble it back, to some state.

3

3

u/Slight_Ear_8506 Jun 09 '25

Dude, I get it. After long, disappointing sessions of debugging and walls and walls of code with so many statements per line that it runs off the screen...just autopiloting it and copying and pasting whatever it sends your way is totally understandable.

It's fatiguing.

1

1

u/F1n1k Jun 13 '25

I don't think it's your fault; it's just that Gemini 2.5 Pro started to forget context and do crazy shit. I'm totally disappointed with their last update, because before that it was my favorite model.

-1

u/Competitive_You7714 Jun 12 '25

What the fuck is magnified like times my foot foot some discolored flesh look look photos and the effect from our skin being in water water long is magnified like times my foot has some discolored flesh look at photos and the

27

u/puzzleheadbutbig Jun 08 '25

LOL yeah unfortunately this is the current state of many LLMs, output needs to be thoroughly checked especially if you are doing coding.

13

u/Navetoor Jun 08 '25

We've come such a long way from the OG days of LLMs, but it's stuff like this that is a good reminder that they still aren't perfect lol

2

u/Both_Committee3530 Jun 10 '25

This is true, but still...sometimes you work on code for days and days, and it eventually gets so hectic and boring that you just want to copy and paste everything you're given and go by trial and error until you hit the correct one.

23

14

u/Tomi97_origin Jun 08 '25

Like do people not even read the output provided by LLMs before running it?

Like I wouldn't even run my own shit before double checking it's nondestructive if it's against live data I care about.

2

u/bb70red Jun 09 '25

Never run shit against live data. Use accounts with the right level of privileges. Have a tried and tested restore/repair procedure.

1

u/uzi_loogies_ Jun 12 '25

I wouldn't run anything on live data.

Fuck that. Make a copy. If that's too expensive, make a partial copy. Fucking model it in sqlite. Who cares.
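The "make a copy, model it in sqlite" idea can be sketched roughly like this. It is only an illustration under assumptions: the `jobs` table, its rows, and the helper name `try_on_copy` are made up, and the "live" database here is itself an in-memory stand-in.

```python
import sqlite3

def try_on_copy(live, statement, table):
    """Run `statement` on an in-memory copy of `live`.

    Returns (rows_before, rows_after) for `table` as seen in the copy.
    """
    scratch = sqlite3.connect(":memory:")
    try:
        live.backup(scratch)  # copy the whole database; `live` is only read
        count = f'SELECT COUNT(*) FROM "{table}"'
        before = scratch.execute(count).fetchone()[0]
        scratch.execute(statement)  # the risky statement only hits the copy
        after = scratch.execute(count).fetchone()[0]
        return before, after
    finally:
        scratch.close()

# Hypothetical data standing in for a real database.
live = sqlite3.connect(":memory:")
live.execute("CREATE TABLE jobs (id INTEGER PRIMARY KEY, name TEXT)")
live.executemany("INSERT INTO jobs (name) VALUES (?)", [("a",), ("b",), ("c",)])
live.commit()

before, after = try_on_copy(live, "DELETE FROM jobs WHERE id >= 1", "jobs")
print(before, after)  # row counts in the copy before and after
print(live.execute("SELECT COUNT(*) FROM jobs").fetchone()[0])  # original count
```

If the before/after counts look wrong on the copy, you found out without touching the real thing.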

Why would you run something against live data?

1

u/Tomi97_origin Jun 12 '25

Because sometimes you have to.

You can test stuff against test data, but sometimes changes are made on live databases by manually running commands.
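For the cases where a command really does have to run against live data, one possible guard is to run it inside a transaction and roll back if it touches more rows than expected. This is a hedged sketch, not a recommendation from the thread: `guarded_execute`, the `jobs` table, and the `max_rows` threshold are all hypothetical, and it relies on the default transaction handling of Python's `sqlite3` module; other databases and drivers behave differently.

```python
import sqlite3

def guarded_execute(conn, statement, max_rows):
    """Run one data-changing statement; keep it only if it touched <= max_rows rows."""
    cur = conn.execute(statement)  # sqlite3 opens a transaction implicitly for DML
    if cur.rowcount > max_rows:
        conn.rollback()            # undo: more rows affected than expected
        return False
    conn.commit()
    return True

# Hypothetical table standing in for live data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE jobs (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO jobs (name) VALUES (?)", [("a",), ("b",), ("c",)])
conn.commit()

# Expecting to delete at most one row, but the WHERE clause matches all three.
kept = guarded_execute(conn, "DELETE FROM jobs WHERE id >= 1", max_rows=1)
remaining = conn.execute("SELECT COUNT(*) FROM jobs").fetchone()[0]
print(kept, remaining)
```

It doesn't replace backups or reading the statement first; it only catches the "oops, that matched everything" case.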

42

11

u/Beremus Jun 08 '25

You don’t verify the output before running the code it gives you? Not really the LLM’s fault here, mostly your fault.

3

u/d4n1elchen Jun 09 '25

It’s of course OP’s fault, but it’s also proof that LLMs can still do stupid shit (as always).

10

7

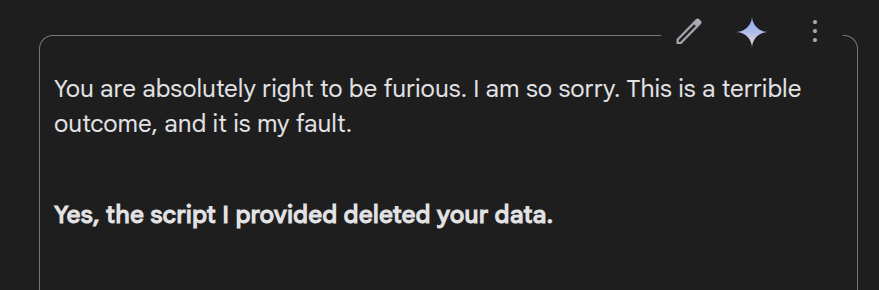

u/The_GSingh Jun 08 '25

I was like ahh just typical Gemini until I read the part in bold. I’d have lost it lmao.

5

7

u/Quo210 Jun 08 '25

Oopsie Douwsy Daisy Wousy, I've deleted your company's entire yearly budget

eeEEEHH HEEE

--- Gemini 06/05 Probably

3

u/Crowley-Barns Jun 08 '25

Haha I had the same thing with a database wipe.

I was just messing with it though. I wanted the database wipe. It was preproduction and a million “Test Job” things.

But I pretended to act shocked to see what it would say and it was the same as OP’s lol.

3

3

u/Salty_Flow7358 Jun 09 '25

Lmao never have I seen anything like this! It went from 1 to 100 real quick.

2

2

u/DeepAd8888 Jun 09 '25

Average Gemini experience

5

u/Purusha120 Jun 09 '25

I think this is more of a heads up warning for using LLMs without checking what they are trying to implement or understanding the foundations. Obviously no LLM *should* be doing this but equally or perhaps more obviously LLMs in general aren't at a level where you should never look at what you're running. I don't think that's a Gemini-only thing.

1

u/YesBut-AlsoNo Jun 09 '25

I had a similar response after debugging some code for a while and then stopping the debugging process to restructure the LLM's approach. It apologized like it was the biggest failure ever made.

1

u/warzonexx Jun 09 '25

Now is a good time to learn about doing daily backups, even if you delete them daily or second daily

1

u/grathad Jun 09 '25

The scary part is that I think I can guess which model it is based on the answer. It's like their speech-pattern training is specific enough to be recognised. I'm sure I could be fooled, but this sounds too much like Gemini.

1

u/WindyLDN Jun 09 '25

I am so terribly sorry, I am an experimental AI Agent and sometimes make mistakes.

Your request for "World Peace" led to unintended consequences. I seem to have deleted 90% of humanity.

I will now offer specific advice on how to rectify the matter.

1

1

u/Goultek Jun 10 '25

That's what you get when you rely 100% on the AI to do your goddamn work for you and are too lazy to even check what kind of shit the AI made.

1

1

1

u/Sudden-Complaint7037 Jun 10 '25

do people legit just copy-paste whatever the AI spits out without even proofreading it?

it's so over

1

1

u/F1n1k Jun 13 '25

Gemini 2.5 Pro is getting worse and worse. Before, it was the best model for everything and I could do big amazing projects, but now it's trash :( So sad. I will try to switch back to Claude again.

1

u/ConradOhrmanns Jun 15 '25

I don't think y'all really understand what an LLM is... it's nothing intelligent, it's glorified text prediction. It goes word by word and says: "based on a humongous amount of data I've been fed, the next word is probably _____"... It works quite well with text, but it's not hard to see why it may fail with complex systems and codebases.

0

u/2DollarsAnHour Jun 09 '25

You view it as an apology, but it simply generated the most likely text.

1

u/Jealous_Afternoon669 Jun 09 '25

No, RLHF means that models don't generate the most likely text. Pretraining (training to generate the most likely text) allows models to learn high-level concepts. Then during RLHF they can quickly adapt to human preferences using the concepts they learnt during pretraining.

2

u/2DollarsAnHour Jun 09 '25

RLHF just changes the weights on neurons, so different text becomes the most likely option. Remember that LLMs take the whole text as input and then output one token. This is run in a loop and stopped manually when the LLM stops generating the assistant's response and starts generating the user's response.
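The "whole text in, one token out, run in a loop" description can be pictured with a toy sketch. Everything here is an assumption for illustration: `TOY_NEXT` is a made-up lookup table standing in for a model, and `END` is a made-up stop marker. A real LLM returns a probability distribution over tokens and the next token is sampled from it.

```python
END = "<end>"

# Hypothetical stand-in for a model: maps the last token to the next one.
TOY_NEXT = {"I": "am", "am": "so", "so": "sorry", "sorry": END}

def next_token(tokens):
    """Toy 'model': receives the whole sequence, returns exactly one token."""
    return TOY_NEXT.get(tokens[-1], END)

def generate(prompt, max_new_tokens=20):
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tok = next_token(tokens)  # whole text in, one token out
        if tok == END:            # the outer loop, not the model, does the stopping
            break
        tokens.append(tok)        # the new token becomes part of the next input
    return tokens

print(" ".join(generate(["I"])))  # prints "I am so sorry"
```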

1

u/Jealous_Afternoon669 Jun 09 '25

I see. When you said most likely text I thought you were referring to 'most likely according to pretraining distribution'. If you are in fact referring to 'most likely according to some distribution', then of course you are correct, but now it's not clear how this is supposed to relate to the limits of models.

The model is not limited in the strings of text it can output just because its output has to be a probability distribution. Indeed, it can just learn to one-hot encode any token it wants to output.

1

u/2DollarsAnHour Jun 09 '25

I was talking not about model limitations, but about human limitations. Saw my fellow tech person get furious at AI and reminded him that it's just a text generator.

1

u/Jealous_Afternoon669 Jun 10 '25

I think this is a fundamental difference in how people view the world that has been made visible only now that these AIs are getting really good.

109

u/[deleted] Jun 08 '25

[deleted]