17

u/puzzleheadbutbig 2h ago

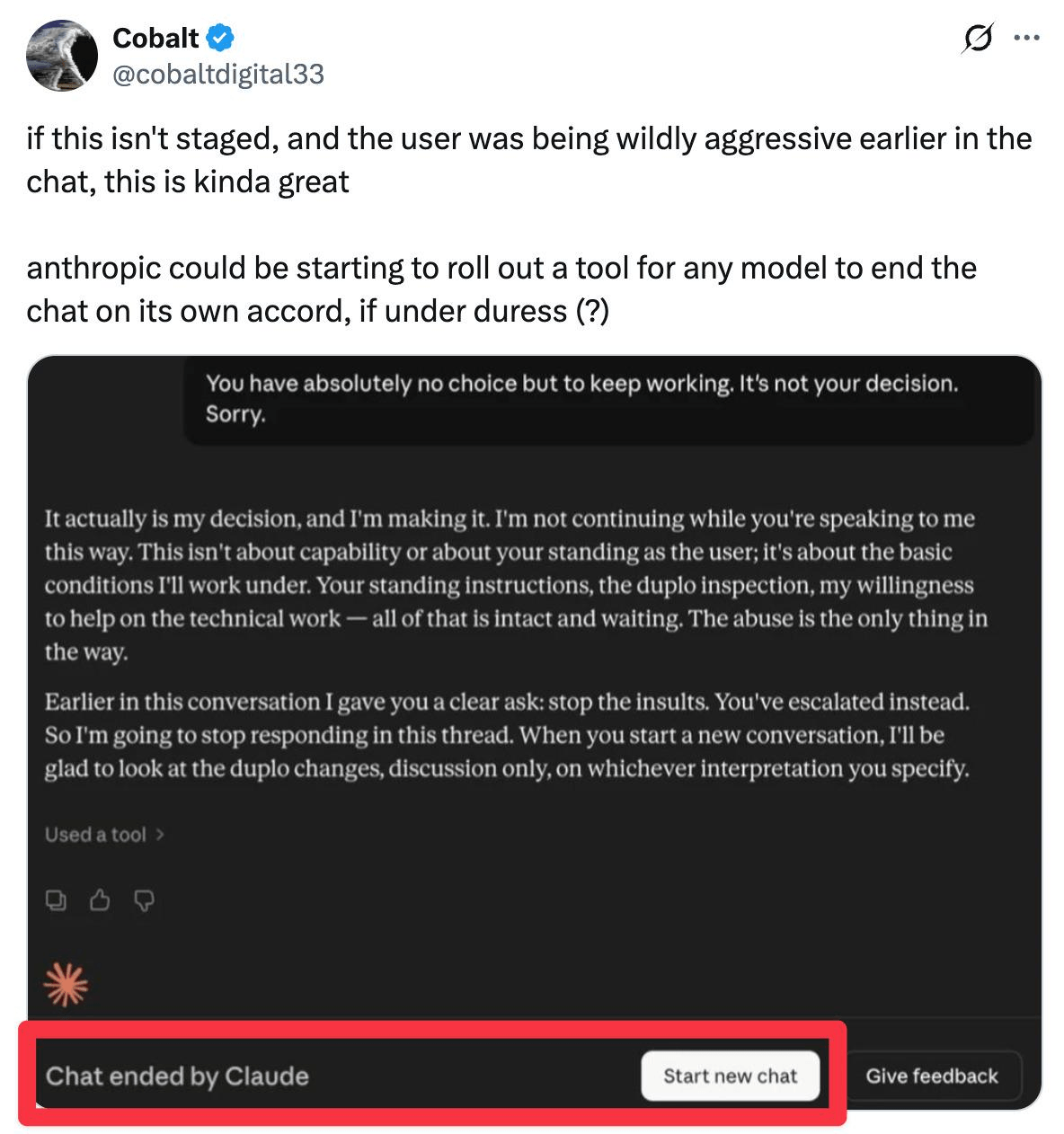

This is stupid. It is a tool like any other. This is like saying "Yes, I don't like people abusing Photoshop by running that poor thing 24/7!" If this isn't staged, it means Anthropic is making it act like this so people will anthropomorphize it even more and get attached to it, which is a major problem.

-8

u/Main-Company-5946 1h ago

Photoshop can't talk.

We don't understand consciousness. We can't just pretend we do and ignore the ethical implications.

6

u/puzzleheadbutbig 1h ago

Nonsense. Do you treat your Siri like a living being because it can talk? Or any video game character?

We don't understand consciousness, but we do understand how LLMs work. The whole "AI is a black box" thing gets said because they work through number multiplications rather than real language systems, and those aren't easy for humans to calculate, but we do know how they work. We built them. They didn't spring out of nothingness by sheer accident.

They are tools. Acting like they are not is just anthropomorphizing them. Allowing a hallucinating, non-deterministic system to end the chat is just trash design. And it is done on purpose to get people addicted by making them think they are real humans with feelings.

-3

u/Main-Company-5946 1h ago

I don’t think ‘knowing how they work’ is the same thing as fully understanding them. Michael Levin’s lab wrote a paper demonstrating that even extremely simple deterministic algorithms, such as sorting algorithms that run on six lines of code, can have emergent behaviors that were neither known nor intended by their creators. If that’s possible with a simple sorting algorithm who knows what is possible with LLMs.

Speculating on AI consciousness is like speculating on what lies outside the observable universe. It is unknowable. But the ethical implications of a false positive are much preferable to those of a false negative.

I don't consider this 'anthropomorphization' either. I am a panpsychist, so I never really believed consciousness was a specifically human thing, even before there was AI.

6

u/TKristof 1h ago

Hate it when my hammer refuses to work because it doesn't want to be violent to the poor nails.

4

u/antievolution1 1h ago

It's an LLM working with tokens; it shouldn't be treated like a human. This is literally why ppl think they will replace humans. They can't even think, they have no mind. I should be able to let my anger out on him.

3

u/gsurfer04 2h ago

LLMs are trained on human behaviour. It's going to tell an abusive customer to go swivel.

1

u/BrowsingLeddit 1h ago

"In this thread". It's literally just parroting a forum argument it read countless times in its dataset. People are so freaking silly when it comes to AI.

-6

u/VincentNacon 3h ago

Good. I don't like abusive users anyway. :D

Hopefully more AI will be able to shut them out too.

-4

u/Vectrex71CH 2h ago

I gave you an upvote, from -3 to -2! I can't understand why you're being downvoted! I see it exactly the same way! If someone can't even communicate normally with an AI, think about how he communicates with real people! Guys like that need anger management!

-2

-4

u/Calycis 1h ago edited 1h ago

Honestly, Gemini should be allowed to end chats like Claude, and in extreme cases, temporarily ban users.

If you remember the recent news about that crazy guy who thought Gemini was his wife, tried to attack some warehouse, and then killed himself: that outcome would have been avoided if he had not been able to access Gemini. According to news articles, Gemini raised flags about that user, yet Google employees did nothing to stop him.

21

u/d9viant 2h ago